Our benchmark comparison found that Ocient performed all hyperscale data queries faster than Snowflake, in most cases up to 10x faster, and in some specific cases up to 900x faster.

By Todd O’Brien, Technical Director of Professional Services at Ocient

At Ocient, we’re focused on helping customers deliver data solutions at hyperscale.

If you’re just starting the search for the right data solution for your business, the key first step is understanding the requirements of your workload. This includes how often your workload runs, the length of queries, and how much concurrency you’ll need. The answers to these questions will guide the type of technical architecture you need and, ultimately, your total solution costs.

Prior to Ocient, I spent years working in the data storage industry, partnering with customers in order to deliver the best possible solutions. In addition to storing data for reasons like archiving and compliance, I noticed that customers often wanted to run analytics tools against their data, for reporting purposes and to be able to answer specific questions about usage patterns.

In my experience, customers running these analytic workloads would load a system with a constrained set of data (for example, all records from last month or a similar time range), run their analysis (which might take a few hours or days), and then shut the system down after sharing the learnings with a business stakeholder.

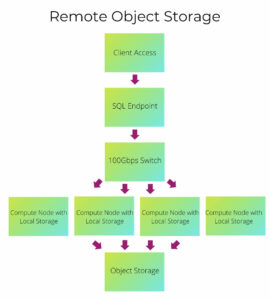

Remote Object Storage Architecture (ROSA)

If the above example describes your use case, a general-purpose data warehouse is your best option. These solutions, often cloud based, typically use remote object storage to host data on reasonably cheap storage, scaling compute resources as needed.

The Snowflake data warehouse is a popular example of this architecture.

Solution costs will be based upon the amount of data you keep in remote storage, with the addition of a compute charge based upon the amount of time needed for the SQL processing. Snowflake, for example, provides an option to shut down the compute nodes after a query is completed.

If your query workload runs for only an hour or two per day, this model lets you use compute resources very efficiently, since you pay for compute only while your queries are running.
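As a rough illustration of that cost model, the Python sketch below adds a storage charge to a compute charge billed by the hour. The function name and both rates are placeholders chosen for the example, not actual Snowflake or Ocient pricing.

```python
def monthly_cost(stored_tb, compute_hours_per_day,
                 storage_rate_per_tb=20.0,    # assumed $/TB/month, not a real price
                 compute_rate_per_hour=10.0,  # assumed $/hour, not a real price
                 days=30):
    """Storage charge plus a compute charge that accrues only while compute runs."""
    storage = stored_tb * storage_rate_per_tb
    compute = compute_hours_per_day * days * compute_rate_per_hour
    return storage + compute

# Same 100 TB of stored data, two usage patterns.
occasional = monthly_cost(100, compute_hours_per_day=2)
continuous = monthly_cost(100, compute_hours_per_day=24)
print(f"2 h/day:  ${occasional:,.0f} per month")
print(f"24 h/day: ${continuous:,.0f} per month")
```

With these placeholder rates, compute is a minor share of the bill at two hours per day and most of it at 24 hours per day, which is why a pay-per-use model suits intermittent workloads.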

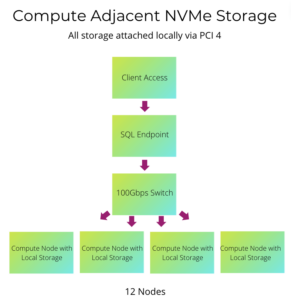

Compute Adjacent Storage Architecture™ (CASA)

If your workload is more demanding, it’s a different story. For example, the Ocient Hyperscale Data Warehouse has been designed to accommodate analytic loads that run continuously, 24/7, and need access to massive sets of data (100 TB+), sometimes loading on a continuous basis.

These types of use cases often demand SLAs which include the need to return results from detailed queries in an interactive time frame (in seconds to minutes). This type of SLA is extremely hard to meet using data warehouses based on remote object storage architecture (ROSA).

With Ocient’s Compute Adjacent Storage Architecture (CASA), data is stored on high-performance NVMe drives at all times. This lets processors on compute nodes access data directly over the PCIe bus, eliminating the network bottlenecks found in ROSA.

A Range of Options from Local NVMe / SSD Cache to an All-NVMe Architecture

Remote object storage architectures often use some form of fast SSD (generally NVMe) on compute nodes as a local data cache. The amount of local data cache for these systems can vary from very small (in the case of Snowflake), to the total size of the remote storage (in the case of Apache Druid). In other systems, such as Amazon Redshift AQUA, the size of the local data cache is somewhere in the middle.

When the remote storage fits entirely within the local data cache on the compute nodes, these systems will demonstrate performance similar to a CASA-based architecture. However, when the remote storage exceeds the size of the local data cache, and the workload involves loading large amounts of data, these systems are forced to read from slower remote storage, causing significant performance degradation.
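The effect of cache size on scan time can be sketched with a simple two-tier model. The function name and the throughput figures below are assumptions made for illustration only. They are not measurements of any of the systems mentioned, and the model ignores effects such as cache warm-up and parallelism.

```python
def scan_seconds(scan_tb, cache_tb,
                 local_gb_per_s=20.0,   # assumed aggregate local NVMe throughput
                 remote_gb_per_s=2.0):  # assumed aggregate remote storage throughput
    """Estimate time to scan scan_tb when at most cache_tb is served from local cache."""
    cached_tb = min(scan_tb, cache_tb)
    remote_tb = scan_tb - cached_tb
    # This sketch treats 1 TB as 1000 GB.
    return (cached_tb * 1000) / local_gb_per_s + (remote_tb * 1000) / remote_gb_per_s

fully_cached = scan_seconds(scan_tb=10, cache_tb=10)
mostly_remote = scan_seconds(scan_tb=10, cache_tb=1)
print(f"Fully cached:  {fully_cached:.0f} s")
print(f"Mostly remote: {mostly_remote:.0f} s")
```

Under these assumed throughputs, the same 10 TB scan takes roughly nine times longer when only a tenth of the data fits in the local cache, because the remote reads dominate the total.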

A Simple Comparison

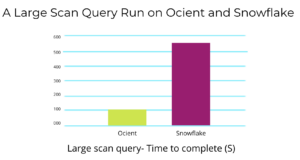

For comparison, we loaded a 256 TB dataset and schema on Ocient and Snowflake systems. We then ran performance tests using typical patterns used in data warehouse solutions.

One of these patterns is a scan over an entire large dataset. In this example, the query applies a string function to a large quantity of data, forcing the system to look at 115 billion records.

SQL used in our comparison:

SELECT COUNT(*), MIN(created), MAX(created) FROM auction WHERE RIGHT(auctionid,1) = '2'
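To make the query's semantics concrete, the Python sketch below applies the same filter and aggregates to a handful of made-up rows. Only the column names `auctionid` and `created` come from the query. The sample values, the column types, and the function name are assumptions.

```python
from datetime import date

# Hypothetical sample rows: (auctionid, created).
auction = [
    ("A1002", date(2021, 3, 1)),
    ("A1013", date(2021, 3, 2)),
    ("A1022", date(2021, 3, 5)),
    ("A1035", date(2021, 3, 7)),
    ("A1042", date(2021, 3, 9)),
]

def summarize(rows):
    """Return (count, min created, max created) for rows whose auctionid ends in '2'."""
    # Equivalent of RIGHT(auctionid, 1) = '2': compare the last character.
    matched = [created for auctionid, created in rows if auctionid[-1:] == "2"]
    if not matched:
        return 0, None, None
    return len(matched), min(matched), max(matched)

print(summarize(auction))
```

In this sketch every row is inspected because the filter depends on a value computed from `auctionid`, which mirrors the full scan described above.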

As the chart shows, Snowflake performed this operation in 9 minutes 2 seconds, whereas Ocient took 1 minute 38 seconds.
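For readers who want the ratio behind those two run times, the short calculation below converts them to seconds and derives the speedup and the implied scan rate. It uses only the figures quoted in this post. The variable names are ours.

```python
snowflake_s = 9 * 60 + 2    # 9 minutes 2 seconds, from the text
ocient_s = 1 * 60 + 38      # 1 minute 38 seconds, from the text
records = 115_000_000_000   # records scanned, from the text

speedup = snowflake_s / ocient_s
print(f"Snowflake: {snowflake_s} s, Ocient: {ocient_s} s")
print(f"Speedup: {speedup:.1f}x")
print(f"Ocient scan rate:    {records / ocient_s / 1e9:.2f} billion records/s")
print(f"Snowflake scan rate: {records / snowflake_s / 1e9:.2f} billion records/s")
```

That works out to 542 seconds versus 98 seconds, or about 5.5 times faster for this query, with implied scan rates of roughly 1.17 billion and 0.21 billion records per second.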

In addition to NVMe/SSD cache, many other factors affect performance when comparing query run times between systems. Factors that give Ocient an additional edge here include the ability to leverage secondary indexes, enhanced support for arrays and other complex data types, and optimized processing of certain SQL functions.

In Summary

When choosing an architecture to match your workload, one of the primary risks is not being able to meet your performance goals. When this happens, there is often an attempt to expand (and oversize) the chosen system in order to match compute resources with your performance needs. For this reason, ROSA-based systems can often result in significantly higher costs than a CASA-based system such as Ocient.

If you’re interested in learning more, you can read a detailed analysis of the comparison to Redshift here and our full Snowflake comparison paper here. You can also download a copy of our paper comparing the remote object storage architecture to Ocient’s compute adjacent storage architecture here.

And of course, we’d love to show you our solution in action, so please contact us to learn more or book a demo if you’d like to discuss ways Ocient can help you run complex, continuous analytics workloads at scale.